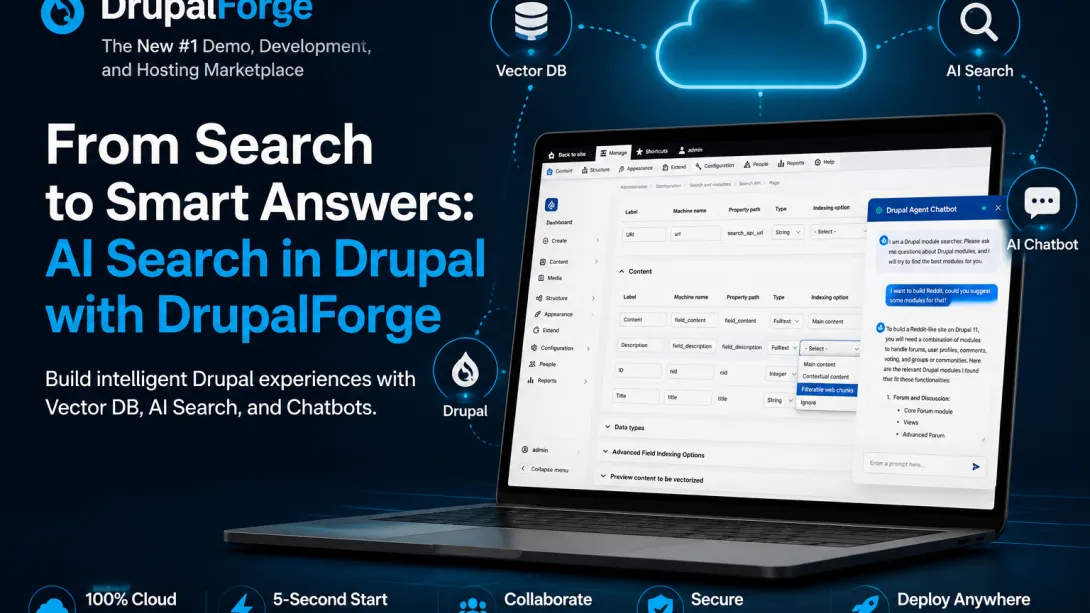

How DrupalForge Makes AI Search and Vector Databases Easier to Demo in Drupal

AI in Drupal is no longer just about adding a chatbot to a website.

The bigger opportunity is helping Drupal understand content, search by meaning, and return useful answers based on what a user is actually trying to do.

In a recent Drupal AI session, Marcus Johansson and Sal Lakhani walked through a working DrupalForge demo showing how Drupal, AI Search, vector databases, and RAG can come together inside a real Drupal environment.

The demo is powerful because it does not present AI as a vague concept. It shows a complete working use case: a Drupal site with thousands of Drupal module records indexed into a vector database, connected to an AI assistant, and made searchable through semantic search.

That matters because AI becomes valuable when people can actually see it working.

The Problem: Traditional Search Is Not Enough

Most Drupal sites still rely heavily on keyword search. Keyword search works when the user knows the exact word, phrase, or module name they are looking for.

For example, if someone searches for “ECA,” a traditional search system can return results that include that exact term.

But what happens when the user does not know the right module name? What if they ask something like: “I want to build something like Reddit. What Drupal modules should I use?”

A traditional search system may look for the word “Reddit” and return Reddit-related integrations, API connectors, or unrelated content. But that is not what the user really meant. The user is asking:

How do I build community discussions?

How do I support comments?

How do I manage moderation?

How do I create user profiles?

How do I support social login?

How do I structure a community platform?

That is where AI Search and vector databases become useful. Instead of only matching words, AI Search can help Drupal search by meaning.

What the DrupalForge Demo Shows

The demo uses a pre-indexed database of roughly 6,000 Drupal modules. Each module includes information such as:

Module description

AI-generated summary

Possible use cases

Affordances, or ways the module could be used

Additional metadata

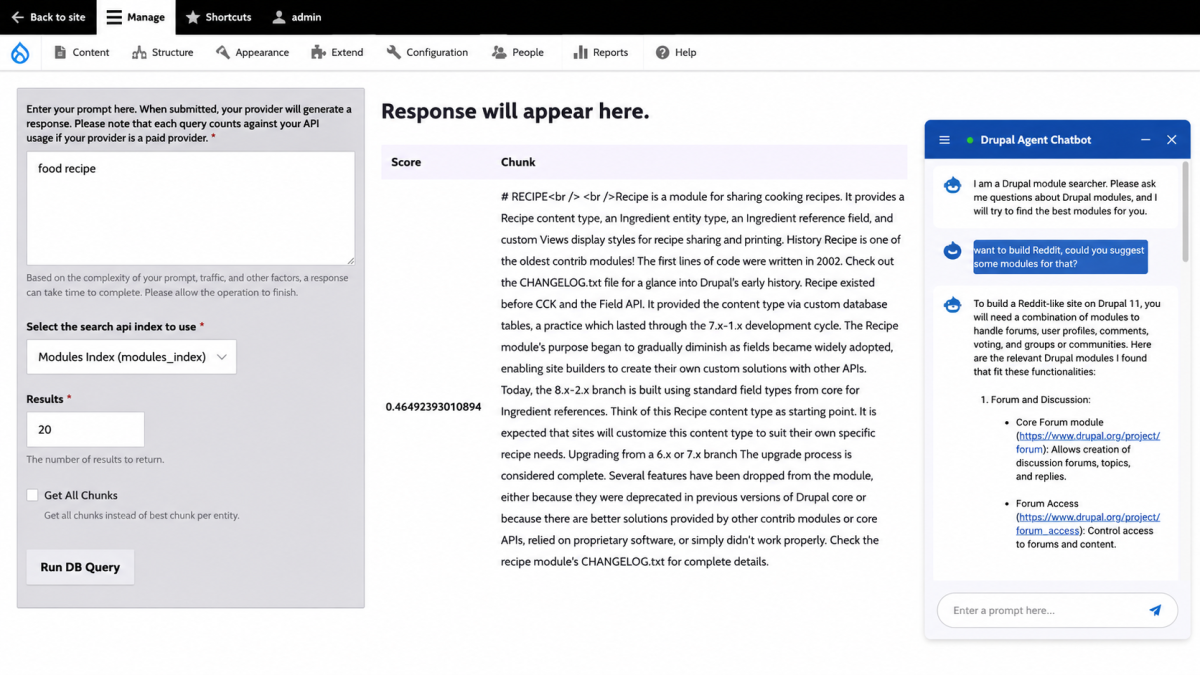

This content is indexed into a vector database. That allows the system to compare the meaning of a user’s question against the meaning of the indexed module content.

So instead of only asking, “Which modules contain this exact keyword?”, the system can ask, “Which modules are closest to what this person is trying to accomplish?”

That is the key difference. Traditional search looks for matching words. AI Search looks for matching meaning.

What Is Vector Search?

Vector search works by converting text into mathematical representations called embeddings.

When content is indexed, the system breaks larger content into smaller pieces called chunks. Each chunk is converted into a vector. When a user asks a question, that question is also converted into a vector.

The vector database then compares the question vector with the stored content vectors and returns the closest matches. This is why semantic search can understand that phrases like “good module” and “great plugin” may be related, even if the exact words are different.
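The comparison step can be illustrated with a minimal sketch. It assumes cosine similarity as the closeness measure and uses made-up three-dimensional vectors; real embedding models produce hundreds or thousands of dimensions, and the module descriptions, vector values, and function names here are hypothetical, not taken from the demo.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical stored chunks with toy "embeddings" (assumed values).
stored_chunks = {
    "Forum: threaded community discussions": [0.9, 0.1, 0.2],
    "Commerce: product catalog and checkout": [0.1, 0.9, 0.1],
    "Social login: sign in with external accounts": [0.6, 0.2, 0.7],
}

def nearest(query_vector, chunks, top_k=2):
    """Score every stored chunk against the query and keep the closest top_k."""
    scored = [(cosine_similarity(query_vector, vec), text) for text, vec in chunks.items()]
    scored.sort(reverse=True)
    return scored[:top_k]

# Toy vector standing in for an embedded question about building a community site.
query_vector = [0.8, 0.1, 0.4]
results = nearest(query_vector, stored_chunks)
for score, text in results:
    print(f"{score:.2f}  {text}")
```

In this toy data the forum and social login chunks rank above the commerce chunk, even though the query shares no keywords with any of them. A vector database does the same kind of comparison, typically with an index so it does not have to score every row.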

For Drupal websites, this can improve search across:

Documentation and knowledge bases

Support articles and product catalogs

Higher education and government websites

Large nonprofit websites and enterprise intranets

Why Chunking Matters

One important technical detail from the demo is chunking. If the chunks are too large, the system may struggle to find precise semantic matches. If the chunks are too small, the system may lose important context.

For Drupal module records, larger chunks may be better because each module is already a compact unit of information. In that case, the goal is often to return relevant modules, not tiny snippets from the same module.

The demo also uses contextual content. This means certain fields, such as the title, can be included with every chunk to give the AI more context. AI Search quality depends heavily on how the content is prepared before it ever reaches the model.
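As a rough illustration of chunking with contextual content, the sketch below splits a body of text into fixed-size word chunks and prepends the title to each one. The function name, the word-based splitting, and the `max_words` parameter are assumptions made for this example; the AI Search configuration in the demo may split and size chunks differently.

```python
def chunk_with_context(title, body, max_words=50):
    """Split body into chunks of at most max_words words, each prefixed with the title."""
    words = body.split()
    chunks = []
    for start in range(0, len(words), max_words):
        piece = " ".join(words[start:start + max_words])
        # The title travels with every chunk so each one stays meaningful on its own.
        chunks.append(f"{title}\n\n{piece}")
    return chunks

# Hypothetical module record: a long description split into three chunks.
long_description = " ".join(["word"] * 120)
chunks = chunk_with_context("ECA", long_description, max_words=50)
print(len(chunks))  # number of chunks produced
```

A larger `max_words` keeps a compact record, such as a single module, in one chunk, which matches the goal of returning whole modules rather than fragments.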

Naive RAG vs. Agentic RAG

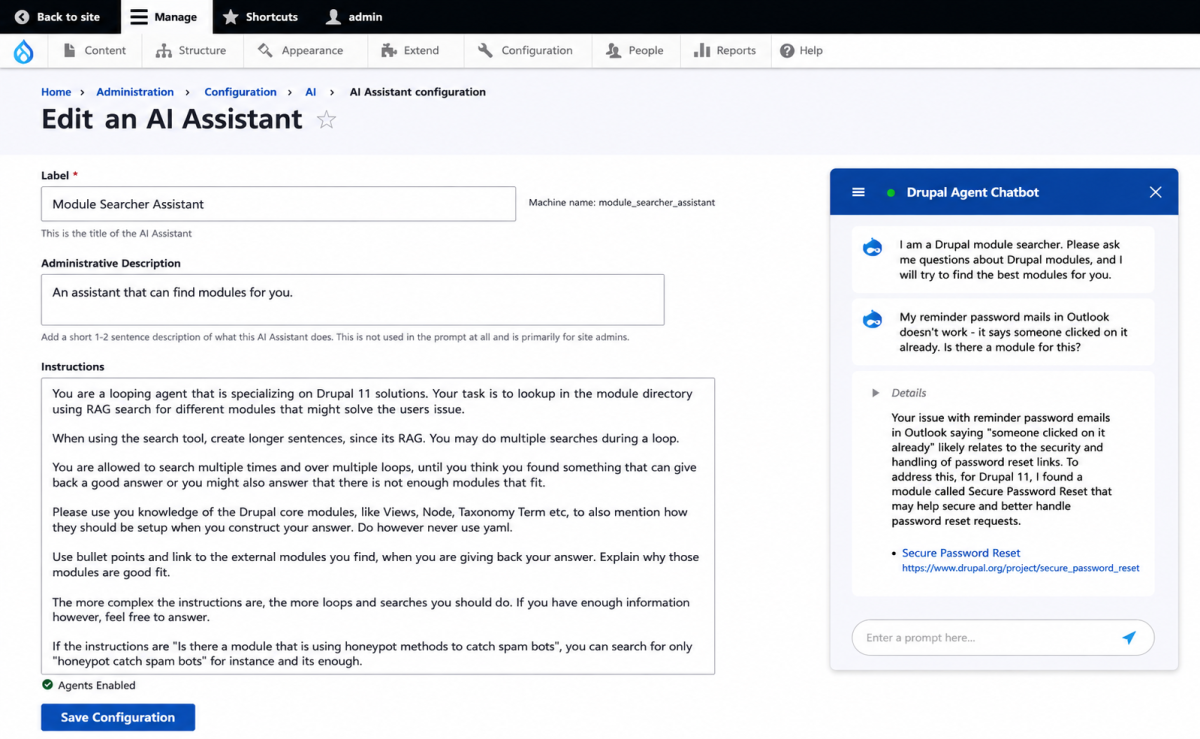

The session also explains an important distinction between naive RAG and agentic RAG. RAG stands for Retrieval-Augmented Generation. In simple terms, it means the AI retrieves relevant information first, then uses that information to generate a better answer.

Naive RAG is the simpler version. A user asks a question, the system runs a single search using that question exactly as written, and sends the results to the AI model.

Agentic RAG tries to understand the intent behind the request. For the Reddit example, it may reason that the user needs forums, comments, moderation, and voting. Then it can search for those concepts, evaluate the results, and return a more useful answer. This is why agentic search feels much smarter; it interprets the goal behind the question.
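The difference in control flow can be sketched in a few lines. This is a toy under loud assumptions: the index is a hypothetical dictionary, `search` is a keyword stand-in for vector search, and `plan_subqueries` is hard-coded where a real system would ask an LLM to infer the intent. None of these names come from the Drupal AI module.

```python
# Hypothetical toy index: module name -> concepts it covers.
INDEX = {
    "Forum": {"forums", "discussions"},
    "Comment": {"comments"},
    "Content Moderation": {"moderation"},
    "Voting API": {"voting"},
    "Reddit Feed": {"reddit"},
}

def search(query):
    """Stand-in for vector search: return modules whose concepts appear in the query."""
    terms = set(query.lower().split())
    return sorted(name for name, concepts in INDEX.items() if concepts & terms)

def naive_rag(question):
    """Run one search with the question exactly as written."""
    return search(question)

def plan_subqueries(question):
    """Stand-in for an LLM step that infers intent; hard-coded for this example."""
    if "reddit" in question.lower():
        return ["forums", "comments", "moderation", "voting"]
    return [question]

def agentic_rag(question):
    """Search once per inferred concept and merge results without duplicates."""
    found = []
    for subquery in plan_subqueries(question):
        for name in search(subquery):
            if name not in found:
                found.append(name)
    return found

question = "I want to build something like Reddit"
print(naive_rag(question))
print(agentic_rag(question))
```

The naive path returns only the Reddit integration, while the agentic path returns the forum, comment, moderation, and voting modules. It also runs four searches instead of one, which is where the extra cost of agentic RAG comes from.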

Why Thresholds Matter

When vector search returns results, each result has a score representing how closely the result matches the user’s query.

The system needs to decide which results are relevant enough to pass to the AI assistant.

If the threshold is too low, the AI may receive too many weak or irrelevant results.

If the threshold is too high, the system may miss useful information.

Better AI does not come from feeding the model everything. Better AI comes from giving the model the right context.
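A minimal sketch of threshold filtering follows. It assumes that higher scores mean closer matches (some vector databases return a distance instead, where lower is closer), and the scores, module names, and `max_results` cap are made up for illustration.

```python
def filter_by_threshold(results, threshold, max_results=5):
    """Keep (name, score) pairs scoring at or above threshold, best first."""
    kept = [r for r in results if r[1] >= threshold]
    kept.sort(key=lambda r: r[1], reverse=True)
    return kept[:max_results]

# Hypothetical similarity scores for one query.
scored = [("Forum", 0.82), ("Comment", 0.74), ("Reddit Feed", 0.41), ("Commerce", 0.18)]

too_low = filter_by_threshold(scored, threshold=0.1)   # weak matches get through
balanced = filter_by_threshold(scored, threshold=0.5)  # only the strong matches
too_high = filter_by_threshold(scored, threshold=0.9)  # nothing passes
print(too_low, balanced, too_high, sep="\n")
```

The right value depends on the embedding model and the content, so it is usually tuned by testing real queries rather than chosen up front.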

How the Demo Was Set Up

The demo uses PostgreSQL with pgvector as the vector database. The module data is indexed ahead of time. This is a critical architectural decision: without pre-indexing, every new demo environment would need to recreate embeddings, consuming time and AI credits.

By pre-indexing the content, DrupalForge can launch the AI demo much faster. Complex AI infrastructure can be packaged into a launchable environment so users can experience the value immediately. Instead of spending hours setting up infrastructure, users can start with a working demo.

From Drupal Search API to AI Search

The workflow shown in the session builds on familiar Drupal Search API concepts:

Set up a vector database.

Connect Drupal to that database.

Configure the AI Search server.

Create a Search API index.

Choose content fields and indexing rules.

Connect the index to a chatbot or enhanced search experience.

AI Search does not throw away the Drupal way of doing things. It extends it.

Chatbot vs. Smarter Search

AI Search does not always need to become a chatbot. Chatbots are exciting but require more production hardening regarding hallucinations, tone, and privacy.

A semantic search upgrade can be safer and easier to deploy. Instead of placing an LLM directly in front of every user answer, you can use vector search to improve traditional search results. A chatbot is a user-facing AI product; semantic search is an infrastructure improvement.

Cost and Model Considerations

Agentic RAG can cost more than simple search because the AI may perform multiple searches for a single question. Additionally, embeddings are not universal: vectors produced by different embedding models are not comparable. If content is indexed using one embedding provider and model, that same provider and model must be used when querying that content.
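One way to guard against mixing embeddings is to record which model built an index and check it before every query. The sketch below is an assumption about how such a check could look; the class, function, and model names are hypothetical and are not part of Drupal's AI Search.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class IndexMetadata:
    """What was used to build the index (hypothetical record)."""
    embedding_model: str
    dimensions: int

def check_query_embedding(index, query_model, query_vector):
    """Raise ValueError if the query vector cannot be compared with the index."""
    if query_model != index.embedding_model:
        raise ValueError(
            f"Index built with {index.embedding_model!r}, query embedded with {query_model!r}"
        )
    if len(query_vector) != index.dimensions:
        raise ValueError(
            f"Expected {index.dimensions} dimensions, got {len(query_vector)}"
        )

index = IndexMetadata(embedding_model="model-a", dimensions=3)
check_query_embedding(index, "model-a", [0.1, 0.2, 0.3])  # passes silently

try:
    check_query_embedding(index, "model-b", [0.1, 0.2, 0.3])
except ValueError as error:
    print(error)
```

Switching embedding models therefore means re-indexing the content, which is worth factoring into cost estimates.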

Regarding privacy, organizations may prefer options such as Azure-hosted OpenAI models, Google Vertex AI, or self-hosted open-source models (like Mistral) depending on the sensitivity of the data.

Why DrupalForge Matters for Drupal AI

The hardest part of adopting AI is not always the model; often it is the setup. DrupalForge changes that experience. Instead of telling someone the steps to configure AI Search, DrupalForge provides a working environment where the demo is already assembled.

DrupalForge makes advanced Drupal AI easier to demonstrate, easier to teach, and easier to understand.

Conclusion

The future of Drupal AI is making Drupal content more understandable, searchable, and useful. This DrupalForge demo shows what that future can look like: Drupal content indexed into a vector database, enhanced with RAG, and exposed through smarter interfaces.

For developers, it is a practical pattern. For agencies, it is a client demo engine. And for the Drupal community, it is a clear example of how Drupal can remain a powerful, flexible, and modern platform in the AI era.

DrupalForge: The New #1 Demo, Development, and Hosting Marketplace for Drupal.